11 May The Value & Extensibility of Microservices Architecture

What is Microservices Architecture?

Microservices architecture is an approach to building applications as a set of small, independently deployable services. Because those services are distributed and loosely coupled, one team’s changes are far less likely to break the entire application, and the architecture supports rapid, linear scaling and extensibility. Microservices allow developers to build new components of applications to meet the changing demands of customers and the market. For example, have you ever purchased a product online? If so, you most likely added an item to your virtual shopping cart. In many online stores, that action is handled by a dedicated microservice. Even using the search bar on a website is often an example of microservices hard at work.

Additionally, microservices are essential components of container-native storage (CNS). The flexibility and extensibility of microservices architecture future-proof CNS, enabling organizations to grow quickly. Read on to learn how microservices architecture works and why it may just be the saving grace your organization needs.

Extensibility of Microservices for Kubernetes Native Storage

In today’s digital world, technology evolves quickly. That is why the foundation of Kubernetes Native Storage (K8sNS) is a microservices architecture designed to adapt as requirements change. Ultimately, microservices enable the K8sNS platform to be agile and flexible.

Microservices-based applications do not perform well on monolithic storage engines. Monolithic storage engines are not container-aware, meaning they treat workloads as traditional applications running in virtual machines, which can obstruct the workflow. Additionally, monolithic architecture creates large volumes, whereas microservices running in Kubernetes call for many smaller volumes. A dynamic, microservices-based architecture, on the other hand, enables enterprises to rapidly scale up or down and to deploy services across data centers and cloud platforms. This gives the organization more flexibility and agility for deploying applications and managing data.

Ultimately, with microservices, changes in the tech stack or the environment don’t mean that you need to rewrite code or make major application changes. Because new services can be added without reworking the rest of the system, your IT team can rest easy at night knowing the application won’t falter. Now that you know a bit more about why microservices architecture is necessary for any organization that runs on Kubernetes, let’s dive into just how it works.

How Does Microservices Architecture Work?

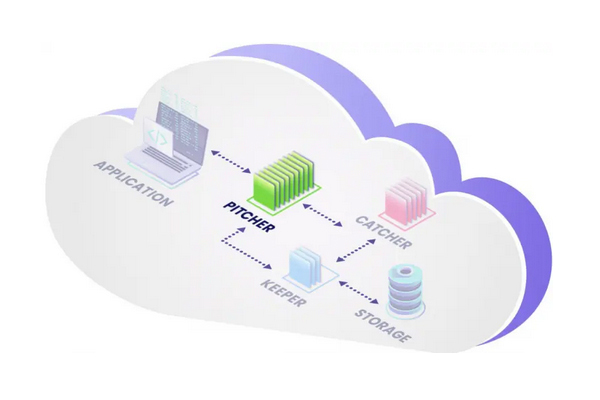

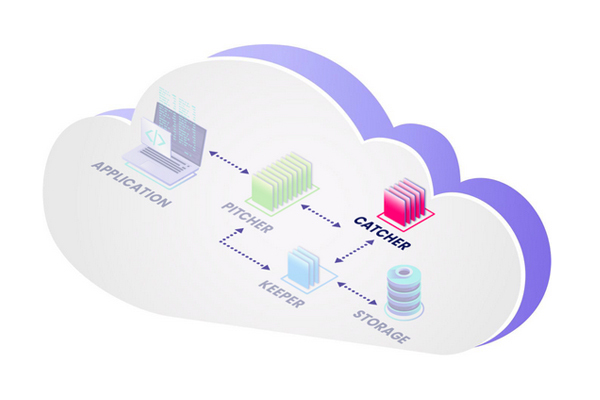

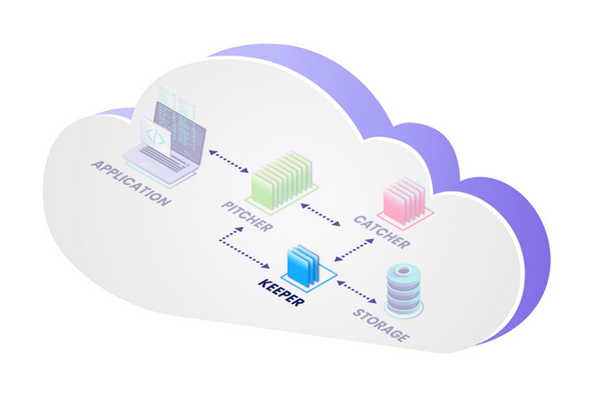

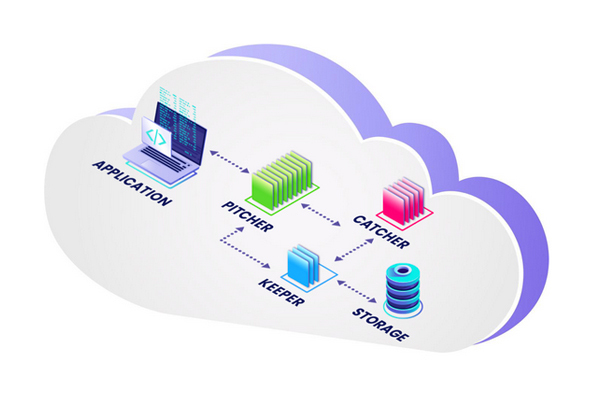

The ionir microservices architecture is separated into three modules: the Pitcher, Catcher, and Keeper. Sounds like a crossover between baseball and Quidditch, doesn’t it? Well, although microservices architecture is not a sport, the pitcher, catcher, and keeper do work in strategic alignment as a team. Below are in-depth descriptions of how each module works and how it benefits an organization’s data storage management.

Pitcher

Basically, the pitcher is the gateway from an application to the rest of the system. The pitcher maintains and updates the most recent view of logical objects and generates a globally unique name for the data. This enables the data to be called upon at any point in time, from any location. Additionally, the pitcher compresses data and provides information about the relationships between all transactions and the data itself. There is one type of pitcher for each emulation (block, file, object, etc.).

Main functions:

- Application/host interface

- Front-end processing

- In-line compression
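
The pitcher’s role can be sketched in a few lines of Python. This is an illustration only, not ionir’s implementation: the `pitch` function and `PitchedWrite` record are hypothetical names, and deriving the unique name from a SHA-256 hash of the content is an assumption, since the article does not say how names are generated. Compression here uses the standard `zlib` module as a stand-in for whatever in-line compression the product performs.

```python
import hashlib
import zlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PitchedWrite:
    """What a pitcher might hand to the rest of the system (hypothetical)."""
    name: str       # unique name for the data (assumed: SHA-256 of the content)
    payload: bytes  # the data after in-line compression
    context: str    # logical object the write belongs to, e.g. a volume ID


def pitch(context: str, data: bytes) -> PitchedWrite:
    """Front-end processing for one application write."""
    # Assumption: the name is derived from the content itself, so identical
    # data always receives the same name regardless of where it was written.
    name = hashlib.sha256(data).hexdigest()
    # In-line compression happens before the data leaves the pitcher.
    payload = zlib.compress(data)
    return PitchedWrite(name=name, payload=payload, context=context)


write = pitch("volume-1", b"a" * 4096)
print(write.name[:12], len(write.payload))
```

Under this assumption, two pitchers in different locations would produce the same name for the same data, which is one way the "call upon the data from any location" property could be achieved.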

Catcher

The catcher is the heart of the entire system. Essentially, the catcher orchestrates tiering decisions and determines where data should be stored on physical blocks. It stores and manages metadata by saving the name of the data along with its context and time. The catcher can also retrieve the name for a given context at a given time, which enables data mobility throughout the enterprise.

Main functions:

- Metadata management

- Data management

- Tiering
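
The catcher’s metadata lookup, "retrieve the name for a given context at a given time," can be pictured as a time-ordered index per context. The sketch below is a minimal illustration under stated assumptions, not ionir’s design: `CatcherIndex`, `record`, and `name_at` are hypothetical names, timestamps are plain numbers, and tiering and physical placement are left out entirely.

```python
import bisect
from collections import defaultdict
from typing import Optional


class CatcherIndex:
    """Hypothetical metadata index: (context, time) -> name of the data."""

    def __init__(self) -> None:
        # Per context, two parallel lists kept sorted by timestamp.
        self._times = defaultdict(list)
        self._names = defaultdict(list)

    def record(self, context: str, timestamp: float, name: str) -> None:
        """Save the name of the data along with its context and time."""
        i = bisect.bisect_right(self._times[context], timestamp)
        self._times[context].insert(i, timestamp)
        self._names[context].insert(i, name)

    def name_at(self, context: str, timestamp: float) -> Optional[str]:
        """Return the name that was current for this context at this time."""
        times = self._times.get(context, [])
        i = bisect.bisect_right(times, timestamp)
        if i == 0:
            return None  # nothing had been written to this context yet
        return self._names[context][i - 1]


index = CatcherIndex()
index.record("volume-1/block-0", 10, "name-A")
index.record("volume-1/block-0", 20, "name-B")
print(index.name_at("volume-1/block-0", 15))
```

Because old entries are never overwritten in this sketch, asking for an earlier timestamp returns the earlier name, which is the idea behind accessing data as of any point in time.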

Keeper

Finally, the keeper (not to be confused with Gryffindor’s Quidditch keeper, Ron Weasley) is the interface to the storage and media. There is one type of keeper per media type, such as SSD, object, or blob storage. The keeper aggregates media resources of the same type and manages them as a single pool. Primarily, the keeper stores data on media and supports the retrieval of the data by name. In addition, the keeper deduplicates data and maintains redundancy across the managed pool.

Main functions:

- Media/storage interface

- Manages data redundancy
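
The keeper’s three jobs (store by name, deduplicate, keep redundant copies across a pool) can be illustrated with an in-memory toy. Everything here is an assumption made for the sake of the example: `KeeperPool` and its methods are hypothetical names, devices are simulated with dictionaries, redundancy is modeled as simple replication, and deduplication relies on identical data arriving under the same name. The article does not describe how ionir implements any of these.

```python
class KeeperPool:
    """Hypothetical pool of same-type media, addressed by data name."""

    def __init__(self, device_count: int = 3, replicas: int = 2) -> None:
        if replicas > device_count:
            raise ValueError("cannot keep more replicas than devices")
        # Each simulated device maps name -> data.
        self._devices = [dict() for _ in range(device_count)]
        self._replicas = replicas
        self._placement = {}  # name -> indexes of devices holding a copy

    def put(self, name: str, data: bytes) -> bool:
        """Store data by name; return False if it was deduplicated."""
        if name in self._placement:
            return False  # already stored: keep one logical copy only
        # Place replicas on the least-filled devices in the pool.
        order = sorted(range(len(self._devices)),
                       key=lambda i: len(self._devices[i]))
        targets = order[:self._replicas]
        for i in targets:
            self._devices[i][name] = data
        self._placement[name] = targets
        return True

    def get(self, name: str) -> bytes:
        """Retrieve data by name from any device that still holds a copy."""
        for i in self._placement[name]:
            if name in self._devices[i]:
                return self._devices[i][name]
        raise KeyError(name)

    def fail_device(self, index: int) -> None:
        """Simulate losing every copy stored on one device."""
        self._devices[index].clear()


pool = KeeperPool()
pool.put("name-A", b"payload")
pool.fail_device(0)
print(pool.get("name-A"))
```

In this toy, losing a single device does not lose the data, because a second replica lives elsewhere in the same pool.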

Microservices Work Together to Execute Kubernetes Native Storage

Modern enterprises call for modern data storage solutions. When applications based on microservices are run in the context of hybrid and multi-cloud, enterprises should consider adding a storage layer that’s aligned with modern architecture.

When working in unison, microservices execute Kubernetes Native Storage (K8sNS) and manage an entire enterprise’s data. By way of microservices, Kubernetes Native Storage pools local physical media capacity and presents virtual volumes to applications. K8sNS eliminates data gravity by enabling instant movement of data to and from any cluster anywhere and instant access to any point in time.

Final Thoughts

The ability to add new microservices without disrupting the platform or requiring changes to the broader environment is what makes K8sNS future-proof. ionir software is built on a modern microservices architecture, enabling rapid, linear scaling and extensibility. Is your organization in need of microservices architecture?

Contact our team to learn more about how microservices can future-proof your enterprise.